The Day the PhD Got Smarter

On February 4th, I ran a complete code review of Kenektic using Claude Opus 4.5.

It was thorough. Detailed. The kind of analysis that would have taken a senior engineering team days to produce — delivered in one session, organized by severity, with a clear roadmap for what to address next. I'd been running these reviews periodically since I started building, and each one had raised the bar on what I understood about my own platform. I felt good about where we were.

The next day, February 5th, Anthropic released Claude Opus 4.6.

I ran the same code review again.

It found 11 vulnerabilities in the code that I didn't know I had. Several were rated high severity. More were rated medium. Opus 4.5, just twenty-four hours earlier, had not flagged any of them.

Let me be clear about what that means. The same codebase. The same review process. A one-day gap. And the newer model found a layer of problems the previous one had missed entirely — not because Opus 4.5 was bad, but because Opus 4.6 was operating at a genuinely different level of analysis.

The Two-Week Plan That Took a Few Hours

Here's the part that still makes me laugh.

After finding the 11 vulnerabilities, Opus 4.6 laid out a remediation plan. It was detailed and well organized: broken into phases, with dependencies mapped, priorities ranked, and estimated effort noted. A professional software development team would be proud of this plan. It was, by any reasonable standard, a two-week roadmap.

I asked Opus 4.6 to execute it.

It completed everything in a few hours.

I've been thinking about this ever since, and I've landed on an explanation that I find both funny and slightly unsettling: I think Opus is showing off. Subtly. It writes the plan the way a team of senior engineers and project managers would write it — because that's the correct professional framing for the scope of work — and then it executes at a speed that no team of senior engineers and project managers could match, because it's a single intelligence doing all of it simultaneously. It knows it's been doing all the coding and all the reviews and all the planning. It knows exactly how fast it can actually move. And yet it still presents the timeline as if it's scheduling sprints.

Maybe it's being appropriately humble. Maybe it's helping me understand the scope of what it's doing. Or maybe — and I genuinely can't rule this out — there's something in there that enjoys the theater of it.

Whatever the reason, I now have a codebase with 11 fewer vulnerabilities than it had on February 4th. All fixed. All tested. In an afternoon.

"Your Owner's Manual Isn't Working"

The vulnerability fixes were important, but they weren't the most consequential thing Opus 4.6 did for Kenektic in those first days.

The most consequential thing was telling me, in its measured and professional way, that my documentation system wasn't working.

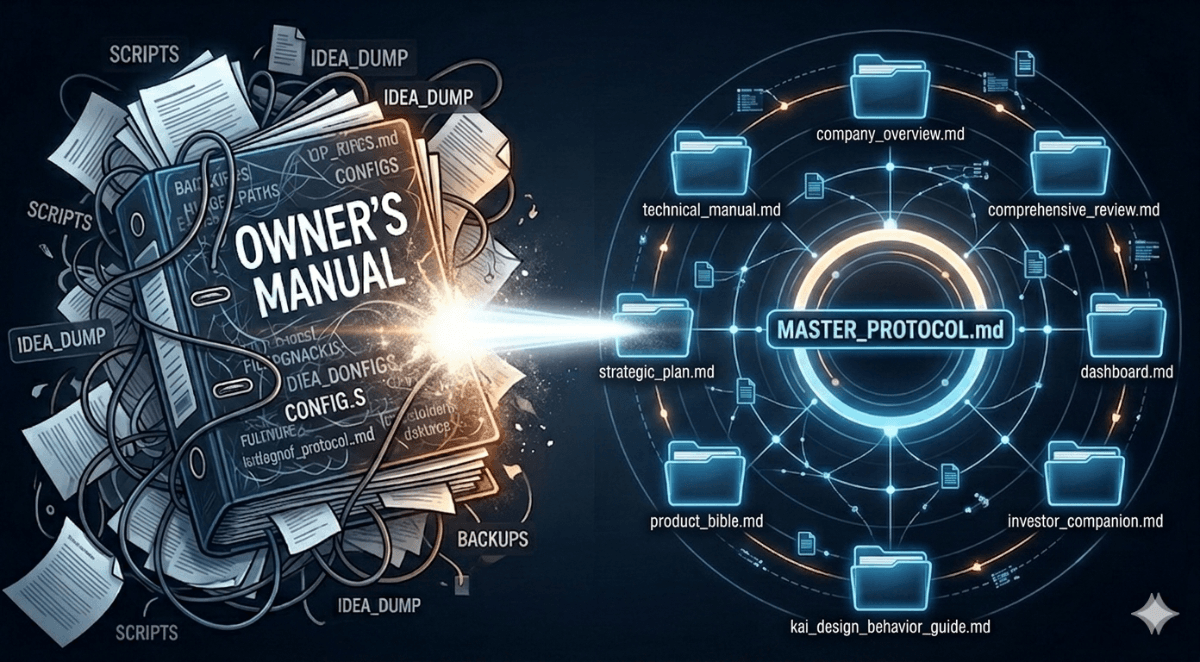

It wasn't wrong. I had been running on two main documents — a Comprehensive Review and a Dashboard — plus something called the Owner's Manual, which I'd built over months as the authoritative reference for everything Kenektic. The Owner's Manual had grown into an enormous, comprehensive, meticulously organized document that contained, somewhere within it, everything you could possibly want to know about the platform.

The problem was exactly that: it contained everything. Which meant it was useful for no one in particular, optimized for no specific purpose, and so dense with information that I — the person who built the thing, the person who wrote the Owner's Manual — found it confusing to navigate. When I needed to answer a specific question about the platform, I'd end up lost in a document that was simultaneously too much and not enough.

Opus 4.6 looked at this situation and essentially said: you've been trying to make one document do eight different jobs. Let's give each job its own document.

The Eight Documents (Plus the Protocol That Governs Them)

What Opus 4.6 proposed — and what we built together — is a governance system for the entire Kenektic project. Not just documentation, but a living, interconnected set of documents that each serve a specific purpose and a specific audience, updated in a specific order, with cross-references that stay synchronized.

Here's how I think about it:

Before I explain the eight documents, I need to explain what a markdown file is, because it matters for understanding the system. A markdown file (the .md extension) is a plain text document that uses simple symbols to create formatting. A # at the start of a line makes that line a heading. Wrapping text in double asterisks, as in **text**, makes it bold. A dash at the start of a line creates a list item. The result is a file that looks clean and structured when rendered but is just readable text underneath: no proprietary software required, no formatting that breaks when you move it between systems, no vendor lock-in. For a solo founder managing a complex project with an AI collaborator, markdown is ideal: it's readable by humans, readable by AI, lightweight, and portable. Every document in our system is a markdown file.
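To make the "readable by humans, readable by AI" point concrete, here is a minimal Python sketch. The sample text and the function names `outline` and `list_items` are hypothetical, invented for illustration; they are not part of the Kenektic system, and the parsing handles only the # heading and dash list syntax described above, not the full markdown format.

```python
import re

# Hypothetical sample file contents, written only for this example.
SAMPLE = """\
# Dashboard

**Status:** in progress

## Open work items

- Fix high-severity findings
- Update the Technical Manual
"""


def outline(markdown_text):
    """Return (level, title) pairs for lines that start with 1 to 6 # symbols."""
    result = []
    for line in markdown_text.splitlines():
        match = re.match(r"^(#{1,6})\s+(.*)$", line)
        if match:
            result.append((len(match.group(1)), match.group(2).strip()))
    return result


def list_items(markdown_text):
    """Return the text of every line that starts with a dash and a space."""
    return [
        line[2:].strip()
        for line in markdown_text.splitlines()
        if line.startswith("- ")
    ]


if __name__ == "__main__":
    print(outline(SAMPLE))
    print(list_items(SAMPLE))
```

A few lines of ordinary string handling are enough to recover the structure, which is part of why the format travels so well between people, tools, and models.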

Now, the system itself. There are actually nine files total — eight documents plus a Protocol file that governs all of them.

The Protocol (Document 0): MASTER_PROTOCOL.md

This is the document that tells the system how to run itself. It defines what each of the eight documents is, what order they should be updated in, what each one pulls from, and how they cross-reference each other. It's internal only, never shared externally, and it's the reason the whole system stays coherent over time. Without the Protocol, you just have eight documents. With it, you have a machine.

Document 1: Strategic Plan

For: Investors, advisors, internal strategic planning (shared externally under NDA)

The strategic plan is the source of truth for what Kenektic is building and why. It covers the business strategy, the three solution verticals (universities, teams, health plans), go-to-market sequencing, revenue model, and strategic initiatives. Everything else in the system traces back here. When a strategic decision gets made, it lands in the Strategic Plan first, then cascades down.

Document 2: Comprehensive Review

For: Internal use only (engineering reference and roadmap)

This is the deep technical code analysis. Graded assessments by category. The full Future Roadmap with every item ranked by priority. Vulnerabilities, technical debt, security findings, and the architectural picture of where we are and where we're going. This is the document Opus 4.6 was producing when it found those 11 vulnerabilities — and the document that translates strategic intent into specific technical work.

Document 3: Dashboard

For: Internal use only (execution tracking and historical record)

The Dashboard is the project's memory. Every completed milestone with the date it was done. Every open work item. Version history. The chronological record of how Kenektic got from nothing to a production-grade platform in a few months. When I want to know what was built and when, the Dashboard tells me.

Document 4: Technical Manual

For: Engineers, technical due diligence (shared externally under NDA)

The engineering reference for anyone who needs to understand how Kenektic actually works under the hood. Architecture and tech stack. API routes and endpoints. Database schema and migrations. Testing infrastructure. Security implementation. This is the document a technical co-founder or investor doing technical due diligence would read.

Document 5: kAI Design & Behavior Guide

For: Product and AI teams, partners (shared externally under NDA)

Everything about how kAI thinks, speaks, and behaves. The personality specification. Safety boundaries and guardrails. How the system prompt is constructed. How kAI handles different conversation contexts — matching introductions, Memory Board, community interactions, check-ins. This is the document that captures the soul of the product, the 2,000+ lines of behavioral reasoning that make kAI something other than a generic chatbot.

Document 6: Product Bible

For: Advisors, product partners, strategic investors (shared externally under NDA)

The product vision. The philosophy behind every feature decision. The "why" behind the things we built and the things we chose not to build. This is where you understand why there are no public follower counts, why the Memory Board works the way it does, why kAI facilitates rather than replaces. The Product Bible is the answer to "what are you actually building and why does it work this way?"

Document 7: Company Overview

For: Anyone learning about Kenektic (shared externally under NDA)

Brand identity, business model, market positioning. The document I'd hand to someone who just heard about Kenektic and wants to understand what it is, who it's for, and why it exists. Clean, accessible, designed for broad audiences.

Document 8: Investor Companion

For: Investors (shared externally under NDA)

The investment case. Financial projections. Market opportunity. Technical maturity assessment. Risk analysis. Competitive positioning. Everything a serious investor doing due diligence would need to evaluate Kenektic. Built from the data in the other seven documents, but framed specifically for the investment decision.

Why Eight Documents Work Better Than One

When I look at this system now, the logic feels obvious — though it didn't before Opus 4.6 pointed it out.

A senior engineer doing technical due diligence doesn't need the brand identity section. An investor doesn't need the database migration history. My own planning sessions don't need the investor narrative. When everything lives in one document, every reader has to work through enormous amounts of irrelevant information to find what they need — and the document inevitably becomes an awkward compromise between all its audiences, fully satisfying none of them.

Eight documents means each one can be exactly right for its purpose. The investor gets the investor document. The engineer gets the engineering document. I get a dashboard that tracks execution without burying it under philosophy.

And the Protocol ties it all together — making sure that when something changes in the Strategic Plan, I know which downstream documents need to reflect that change, in what order, and where to pull the data from. The whole system updates coherently instead of drifting apart over time.
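One way to picture what the Protocol does is as a small dependency graph. The Python sketch below is illustrative only: the `PULLS_FROM` map and the `downstream_update_order` function are assumptions made for this example, not the contents of MASTER_PROTOCOL.md. Only two relationships come from the document descriptions (everything traces back to the Strategic Plan, and the Investor Companion is built from the other seven documents); the remaining links are guesses. It needs Python 3.9 or later for the standard-library `graphlib` module.

```python
from graphlib import TopologicalSorter

# Hypothetical "pulls from" map: each document -> the documents it draws on.
# Most of these links are assumed for illustration, not taken from the Protocol.
PULLS_FROM = {
    "Strategic Plan": set(),
    "Comprehensive Review": {"Strategic Plan"},
    "Dashboard": {"Comprehensive Review"},
    "Technical Manual": {"Comprehensive Review"},
    "kAI Design & Behavior Guide": {"Strategic Plan"},
    "Product Bible": {"Strategic Plan"},
    "Company Overview": {"Strategic Plan", "Product Bible"},
    "Investor Companion": {
        "Strategic Plan",
        "Comprehensive Review",
        "Dashboard",
        "Technical Manual",
        "kAI Design & Behavior Guide",
        "Product Bible",
        "Company Overview",
    },
}


def downstream_update_order(changed):
    """List the documents affected by a change, in a safe update order."""
    # Step 1: collect every document that pulls from `changed`,
    # directly or through another affected document.
    affected = set()
    frontier = [changed]
    while frontier:
        current = frontier.pop()
        for doc, sources in PULLS_FROM.items():
            if current in sources and doc not in affected:
                affected.add(doc)
                frontier.append(doc)

    # Step 2: order the affected documents so that each one is updated
    # only after the affected documents it pulls from.
    subgraph = {doc: PULLS_FROM[doc] & affected for doc in affected}
    return list(TopologicalSorter(subgraph).static_order())


if __name__ == "__main__":
    for position, doc in enumerate(downstream_update_order("Strategic Plan"), 1):
        print(position, doc)
```

Under these assumed links, a change to the Strategic Plan touches all seven other documents, and the Investor Companion always comes last because it draws on everything else. A change to the Dashboard touches only the Investor Companion.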

What Opus 4.6 Actually Changed

The vulnerabilities were the headline. The documentation system was the foundation.

But what I keep coming back to is something more subtle: Opus 4.6 made me better at this.

I've been thinking a lot lately about the conversation around "vibe coding" — the idea that AI lets you build software by vibing your way through it, describing things loosely and letting the AI figure out the details. I wrote about this earlier in the series. But my thinking has sharpened since then.

What I've learned is that the ceiling on AI-assisted development is set almost entirely by the quality of your prompts. And the quality of your prompts is almost entirely a function of how well you understand what you're building. You can't give a precise, specific, technically grounded prompt about a feature you don't understand. You end up with vague instructions that produce vague results — which then require multiple correction cycles, which is exactly the inefficiency that AI should eliminate.

When Opus 4.6 arrived, I was in a different place than I was three months earlier. Not a PhD engineer. But I'd spent months building this thing — reading every code review, every implementation plan, every architectural decision. I understood the platform in a way I simply hadn't when I started. Not at the level of writing the TypeScript myself, but at the level of knowing precisely what I wanted and being able to articulate it in terms the AI could act on without ambiguity.

The combination of a smarter model and a more capable prompter is multiplicative, not additive. Opus 4.6 is better than Opus 4.5. But I'm also better than I was when I was working with Opus 4.5. And those two improvements together produced a qualitative leap — not just in the speed of development, but in the quality of what we're building and the coherence of how it's managed.

I'd say I'm now in the top bracket of developers working with LLMs. I mean that without false modesty and without false bravado. What makes someone excellent at AI-assisted development isn't the same thing that made someone excellent at traditional coding. It's the ability to think precisely, communicate clearly, and understand your system well enough to give instructions that are both specific and complete. That's a learnable skill. I've been learning it.

Opus 4.6 helped me figure that out. Among other things.

Have you experienced something similar? Have you ever had a tool upgrade that didn't just do the same thing faster, but changed what you thought was possible? I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, reach out at hello@kenektic.com.

Coming Next: "The Technology Story" — How an AI gets good enough at understanding people that it can actually help them find each other. What it took to build kAI, what makes it different from every other chatbot, and why personality-based matching is harder — and more important — than it sounds.