$510 in One Week. Here's How I Cut That to Almost Nothing.

I'm looking at my Anthropic billing dashboard on a Sunday morning, coffee in hand, feeling pretty good about life. Maya met Sarah. The virtual beta worked. The conversational intelligence layer was doing exactly what I'd hoped.

And then I count the charges.

Six of them. Each one around $85. In one week.

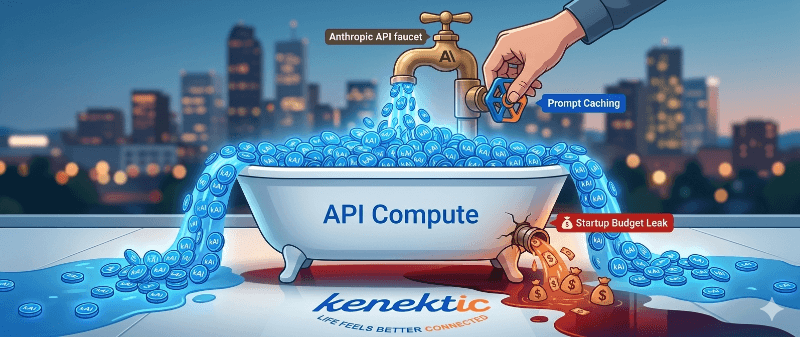

Here's what happened: I'd set my account to auto-reload — top it back up to $100 any time my balance dropped below $15. Seemed sensible at the time. What I hadn't calculated was what happens when you run fifty AI-powered personas, each having multiple conversations per simulated day, simultaneously, with the full kAI system processing every exchange. My account was draining and refilling like a bathtub with a slow leak and an automatic faucet. I'd spent $510 before I even noticed.

Six hundred dollars for the whole simulation, as I mentioned last week. Not unreasonable for what it produced. But $510 of that in a single week made it suddenly, viscerally real: compute is going to be my biggest ongoing expense when real users start talking to kAI. And if I didn't understand exactly where the money was going, I was going to have a very unpleasant conversation with myself every time I checked the dashboard.

I spent the next week going very deep. What I found were four separate problems — and four solutions that, together, cut my cost per message by about 97%.

Where the Money Actually Goes

When kAI responds to any message, it's doing several expensive things at once.

First, it reads its instructions — the entire behavioral system I've built: conversational techniques, emotional intelligence rules, safety guidelines, personality frameworks. Every time. For every user. For every message. That instruction set runs about 17,000 tokens, which is roughly 50 pages of material kAI has to process before it can say a word.

Second, it reads everything it knows about you — your personality profile, your conversation history, your interests, your emotional state, your matches. A fresh assembly, unique to each user, on every call.

Third, for certain conversations, it reaches into an external database — pulling relevant psychology research, memories from past conversations, patterns from previous interactions. More tokens. More API calls. More cost.

The token is the unit of currency in AI — roughly three-quarters of a word — and you pay for every one on both sides of every call. Running fifty personas through a simulated month of daily conversations meant millions of tokens a day. Of course the bathtub was draining.
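To make "you pay for every one on both sides" concrete, here is a minimal sketch of per-call cost. The prices are placeholders: an assumed $3 per million input tokens and $15 per million output tokens, used for illustration only, since actual rates vary by model and change over time. The 3,000 tokens of user context and the 300-token reply are also assumptions. Only the 17,000-token instruction figure comes from this post.

```python
# Placeholder prices in dollars per million tokens (assumptions, not quoted rates).
INPUT_PRICE_PER_MTOK = 3.00
OUTPUT_PRICE_PER_MTOK = 15.00


def call_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one API call; input and output tokens are both billed."""
    return (
        input_tokens * INPUT_PRICE_PER_MTOK + output_tokens * OUTPUT_PRICE_PER_MTOK
    ) / 1_000_000


# One hypothetical kAI call: 17,000 tokens of instructions plus an assumed
# 3,000 tokens of per-user context in, and an assumed 300-token reply out.
cost = call_cost(input_tokens=17_000 + 3_000, output_tokens=300)
print(f"${cost:.4f} per call")
```

Under those assumptions a single call comes to about 6.5 cents, and most of that is input. Multiply it by every message from fifty personas and the draining bathtub makes sense.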

Fix #1: Stop Re-Reading the Same 50 Pages Every Time

kAI's behavioral instructions don't change. The rules for how it handles a vulnerable moment, the framework for detecting what kind of conversation someone needs, the guidelines for when to ask a question and when to just listen — identical for every user, every message, every day. And I was paying to re-read all of it on every single API call.

Anthropic offers something called prompt caching. Mark a section of your instructions as cacheable, and Anthropic holds it in memory on their servers. The next call using the same instructions reads from cache instead of reprocessing from scratch. Cached tokens cost 90% less.

The implementation took one day. Split the system prompt into two blocks — the static instructions that never change, and the dynamic context that's unique to each user. Mark the static block for caching. Done.
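The split can be sketched as a function that builds the `system` argument for Anthropic's Messages API. That argument is a list of text blocks, and putting `cache_control: {"type": "ephemeral"}` on the static block marks the end of the cacheable prefix. The function name and the placeholder strings are hypothetical, and this is not kAI's actual code. The block shape follows Anthropic's prompt-caching format as I understand it, so check it against the current docs.

```python
from typing import Any


def build_system_blocks(static_instructions: str, user_context: str) -> list[dict[str, Any]]:
    """Build the `system` list for a Messages API call.

    The static block comes first and carries cache_control, so the prefix up to
    and including it can be read from the prompt cache on later calls. The
    per-user block sits after that breakpoint and is billed normally each time.
    """
    return [
        {
            "type": "text",
            "text": static_instructions,
            "cache_control": {"type": "ephemeral"},  # the line that enables caching
        },
        {
            "type": "text",
            "text": user_context,  # unique per user, so it is never cached
        },
    ]


# Placeholders only; the static text must be byte-identical on every call
# for the cached prefix to match.
STATIC_INSTRUCTIONS = "<static behavioral instructions, identical for every user>"
system = build_system_blocks(
    STATIC_INSTRUCTIONS, "<this user's profile, memory summaries, retrieved research>"
)
```

With the official Python SDK, this list would be passed as `system=system` to `client.messages.create(...)`. The response's `usage` object reports `cache_creation_input_tokens` and `cache_read_input_tokens`, which gives an easy way to confirm the cache is actually being hit.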

The result: a 70% reduction in API costs, immediately. The 17,000 tokens of behavioral instructions I'd been paying full price for on every call now cost a fraction of a cent per message. As long as any user has talked to kAI in the last five minutes, the cache stays warm for the next call. During active hours, it's almost always hot.
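A rough check on why the cached portion gets so cheap. This assumes Anthropic's multipliers for the five-minute cache: the first write is billed at 1.25× the base input price, and each later read at 0.1×. It reuses the assumed $3-per-million base price from earlier. The thousand-call count is hypothetical, and the sketch also assumes the cache never expires between calls.

```python
BASE_PRICE_PER_MTOK = 3.00  # assumed base input price, $ per million tokens
CACHE_WRITE_MULT = 1.25     # first call (cache miss) pays a 25% premium
CACHE_READ_MULT = 0.10      # later calls (cache hits) pay 10% of base
STATIC_TOKENS = 17_000      # size of kAI's static instructions, from the post


def static_prefix_cost(calls: int, cached: bool) -> float:
    """Dollar cost of sending the static instructions on `calls` calls.

    With caching, assumes one cache write followed by a hit on every later
    call, i.e. the cache stays warm the whole time.
    """
    per_call_full = STATIC_TOKENS * BASE_PRICE_PER_MTOK / 1_000_000
    if not cached or calls == 0:
        return calls * per_call_full
    return per_call_full * (CACHE_WRITE_MULT + (calls - 1) * CACHE_READ_MULT)


uncached = static_prefix_cost(1_000, cached=False)
with_cache = static_prefix_cost(1_000, cached=True)
print(f"uncached ${uncached:.2f}, cached ${with_cache:.2f}")
```

On those assumptions the static portion drops from about $51.00 to about $5.16 per thousand calls. The overall bill falls by less than 90% because per-user context and output tokens are still billed at full price. That fits with a roughly 70% overall reduction.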

Seventy percent. From one day of work and one line of code.

Fix #2: Teach kAI to Remember Without Re-Reading Everything

kAI is designed to be a long-term companion — someone you talk to for months, even years. Without any compression, a user who chats daily for a year might generate thousands of messages. Sending all of that raw history on every call would cost roughly $0.66 per message and eventually break entirely — even the largest AI models have limits on how much they can hold at once.

The solution was a tiered memory system modeled on how human memory actually works.

At the end of each day, a summarization engine compresses that day's raw conversation (maybe 50 messages and 15,000 tokens) into a short paragraph of about 200 tokens. That's roughly a 98% reduction, with the important context preserved. Daily summaries roll up into weekly summaries. Weekly into monthly. Monthly into quarterly. Quarterly into annual summaries that capture the arc of the full year.
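The roll-up can be sketched as a small hierarchy where each tier is built by summarizing the tier below it. This is an illustrative design, not kAI's actual code. `summarize` and `TieredMemory` are hypothetical names. In the real system, `summarize` would be a call to a cheap model such as Haiku. Here it only joins and truncates text so the example runs offline. The grouping sizes are simplified assumptions: 7 days per week, 4 weeks per "month", 3 months per quarter, and 4 quarters per year.

```python
from dataclasses import dataclass, field

TIER_NAMES = ["daily", "weekly", "monthly", "quarterly", "annual"]
# How many items of each tier roll up into one item of the next tier.
# Simplified, assumed sizes (a "year" here is 336 days; real calendars need dates).
GROUP_SIZES = {"daily": 7, "weekly": 4, "monthly": 3, "quarterly": 4}


def summarize(texts: list[str], max_chars: int = 200) -> str:
    """Hypothetical stand-in for a call to a small, cheap summarization model.

    It only joins and truncates so the sketch runs offline; a real version
    would ask the model for a faithful short summary of `texts`.
    """
    return " | ".join(texts)[:max_chars]


@dataclass
class TieredMemory:
    levels: dict[str, list[str]] = field(
        default_factory=lambda: {name: [] for name in TIER_NAMES}
    )

    def end_of_day(self, raw_messages: list[str]) -> None:
        """Compress one day of raw messages, then cascade any completed roll-ups."""
        self.levels["daily"].append(summarize(raw_messages))
        # Walk up the tiers; stop as soon as a tier has not just completed a group.
        for lower, upper in zip(TIER_NAMES, TIER_NAMES[1:]):
            items = self.levels[lower]
            group = GROUP_SIZES[lower]
            if len(items) % group != 0:
                break
            self.levels[upper].append(summarize(items[-group:]))


memory = TieredMemory()
for day in range(336):
    memory.end_of_day([f"day {day}, message {i}" for i in range(50)])
print({name: len(items) for name, items in memory.levels.items()})
# → {'daily': 336, 'weekly': 48, 'monthly': 12, 'quarterly': 4, 'annual': 1}
```

A prompt would then plausibly include only a recent slice of each tier, such as the last few days, the last few weeks, and the older monthly and quarterly arcs. That keeps per-call memory small no matter how long someone has been talking to kAI.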

A user who's been talking to kAI every day for a year generates about 10,000 tokens of compressed memory instead of 5.5 million tokens of raw history. The summaries are generated by Claude Haiku — Anthropic's fastest, cheapest model — so the cost of creating them is minimal. The user who's been with Kenektic for two years doesn't cost dramatically more per message than someone who signed up last week. That's what makes a lifelong companion commercially viable, not just technically possible.
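The numbers in this section can be checked with a few lines of arithmetic. The inputs are the post's own figures: 15,000 raw tokens per day, 200 tokens per daily summary, and about 10,000 tokens of tiered memory after a year. Nothing here is measured.

```python
DAYS = 365
RAW_TOKENS_PER_DAY = 15_000        # ~50 messages a day, from the post
SUMMARY_TOKENS_PER_DAY = 200       # one daily summary, from the post
COMPRESSED_MEMORY_TOKENS = 10_000  # the post's figure for a year of tiered memory

raw_year = DAYS * RAW_TOKENS_PER_DAY
daily_reduction = 1 - SUMMARY_TOKENS_PER_DAY / RAW_TOKENS_PER_DAY
year_reduction = 1 - COMPRESSED_MEMORY_TOKENS / raw_year
print(raw_year, f"{daily_reduction:.1%}", f"{year_reduction:.2%}")
```

365 days × 15,000 tokens comes to 5,475,000, which is the "5.5 million" above. Each daily summary on its own is a reduction of about 98.7%, and a year of tiered memory is roughly 0.2% the size of the raw history.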

Fix #3: Smarter Search Through the Research Library

kAI has access to over three thousand sources on loneliness, friendship formation, and human connection. When a conversation touches on grief, or the loneliness of retirement, or social anxiety after a divorce, kAI searches that database and surfaces the most relevant research. This is called RAG — Retrieval-Augmented Generation. Instead of cramming everything into the AI's instructions, you store knowledge externally and retrieve only what's relevant for each specific moment.

To search that database, you need an embedding model: a system that converts text into long lists of numbers called vectors. Text with similar meaning ends up with similar numbers, even if the words are completely different. "I've been feeling isolated since the move" and "It's lonely being the new person in town" would produce very similar vectors that sit close together in that numeric space.
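Here is a toy illustration of how "similar numbers" is usually measured. It computes the cosine similarity between two vectors, then ranks stored passages by that score. The three-dimensional vectors are made up for readability. Real embeddings here would have 1,024 or 1,536 dimensions and would come from the embedding model. The document ids and `top_k` are hypothetical and are not any vendor's API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: list[float], library: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(
        library,
        key=lambda doc_id: cosine_similarity(query, library[doc_id]),
        reverse=True,
    )
    return ranked[:k]


# Made-up vectors standing in for real embeddings of research passages.
library = {
    "loneliness-after-relocation": [0.9, 0.1, 0.2],
    "grief-and-bereavement": [0.1, 0.9, 0.1],
    "retirement-isolation": [0.7, 0.2, 0.5],
}
query = [0.85, 0.15, 0.25]  # imagine: "It's lonely being the new person in town"
print(top_k(query, library))
# → ['loneliness-after-relocation', 'retirement-isolation']
```

A production vector database does the same kind of ranking, but it typically uses an index so it doesn't have to compare the query against every stored vector.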

I'd been using OpenAI's embedding model. It worked — but it was producing 1,536 numbers per piece of text, which meant heavy storage, slower searches, and a second vendor relationship alongside Anthropic.

I switched to a product called Voyage AI. Their model produces 1,024 numbers instead of 1,536 — 33% smaller — which means less storage and faster searches across every database table. More importantly, Voyage AI treats storing content and searching for it as different operations, encoding each in a way that's optimized for how they'll be used. A 2,000-word research paper and a ten-word conversational query are very different kinds of text. The model knows that.
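The storage difference is easy to quantify. This assumes each dimension is stored as a 4-byte float32, which is a common default, though some databases store vectors differently. The chunk count is a hypothetical round number, not kAI's actual corpus size. On the storing-versus-searching point, Voyage's Python client exposes this through the `input_type` argument of `embed` (`"document"` when indexing, `"query"` when searching). That call needs an API key, so it is only described here and not run.

```python
BYTES_PER_FLOAT32 = 4


def vector_storage_bytes(num_vectors: int, dimensions: int) -> int:
    """Raw bytes for the vectors alone (no index or metadata overhead)."""
    return num_vectors * dimensions * BYTES_PER_FLOAT32


NUM_CHUNKS = 100_000  # hypothetical number of stored text chunks

bytes_1536 = vector_storage_bytes(NUM_CHUNKS, 1_536)  # previous model's size
bytes_1024 = vector_storage_bytes(NUM_CHUNKS, 1_024)  # Voyage model's size
savings = 1 - bytes_1024 / bytes_1536
print(f"{bytes_1536 / 1e6:.0f} MB -> {bytes_1024 / 1e6:.0f} MB ({savings:.0%} smaller)")
# → 614 MB -> 410 MB (33% smaller)
```

Each vector shrinks from 6,144 bytes to 4,096 bytes. Search gets faster partly because every similarity comparison touches a third fewer numbers.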

Retrieval quality improved. Cost went down. One less vendor to manage.

Fix #4: Stop Fetching What Should Just Live in the Brain

The psychology training I wrote about a few weeks ago — the eight conversational techniques, the loneliness intelligence frameworks, the emotional support patterns — I'd considered storing all of it in the external database and retrieving it dynamically.

That would have been a mistake.

kAI needs those techniques on every single message. Conversation type detection, emotional labeling, validation intensity matching — these aren't situational. They're kAI's baseline. Retrieving them via database search on every call would be like a chef looking up how to hold a knife before every cut. The lookup cost compounds fast, retrieval is never fully guaranteed, and you're adding latency to every response.

Instead, I baked all of it directly into the static system prompt — the block that's now cached at a 90% discount. The behavioral training costs almost nothing to carry because it rides along with the cache. Always there. Always guaranteed. Zero retrieval lag.

What the Numbers Look Like Now

Before these four changes, a single active user having ten conversations a day could cost roughly $0.66 per message. Multiply that across a thousand university students and the economics fall apart entirely.

After: approximately $0.02 per message. The same thousand students cost about 3% of what they would have. Margins that make an annual university pricing model not just possible, but genuinely sustainable.
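Here are the before-and-after numbers worked through for the thousand-student example. The $0.66 and $0.02 per-message figures come from the post. The ten messages per student per day is an assumption for illustration, because the text counts conversations, and a conversation can contain several messages.

```python
STUDENTS = 1_000
MESSAGES_PER_STUDENT_PER_DAY = 10  # assumption for illustration
COST_BEFORE = 0.66                 # $ per message, before the four fixes
COST_AFTER = 0.02                  # $ per message, after

daily_before = STUDENTS * MESSAGES_PER_STUDENT_PER_DAY * COST_BEFORE
daily_after = STUDENTS * MESSAGES_PER_STUDENT_PER_DAY * COST_AFTER
reduction = 1 - COST_AFTER / COST_BEFORE

print(f"per day: ${daily_before:,.0f} -> ${daily_after:,.0f}")
print(f"per 30 days: ${30 * daily_before:,.0f} -> ${30 * daily_after:,.0f}")
print(f"reduction per message: {reduction:.0%}")
```

Under that assumption, the cost goes from roughly $6,600 a day to $200 a day. The per-message reduction works out to about 97%, matching the figure at the top of the post.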

A week after that Sunday morning, I knew exactly where the money was going, and the answer turned out to be less "panic" and more "learn some things and fix them."

Next week I want to talk about what that learning process actually looked like. None of this came from a computer science background. It came from reading documentation, asking questions, and refusing to be intimidated by terminology I'd never heard before. That story feels worth telling on its own.

Have you experienced something similar? Have you ever found an expensive problem in your business and discovered the fix was more accessible than the problem made it seem? That gap between "this is terrifying" and "this is actually solvable" can close faster than you'd expect. I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: The Finance Guy Who Learned to Think Like an Engineer — This week was about the money. Next week is about what it actually took to understand and implement the architecture behind it — and why a non-technical founder understanding his own systems at a granular level turned out to matter.